Learning Video Object Segmentation with Visual Memory

Abstract

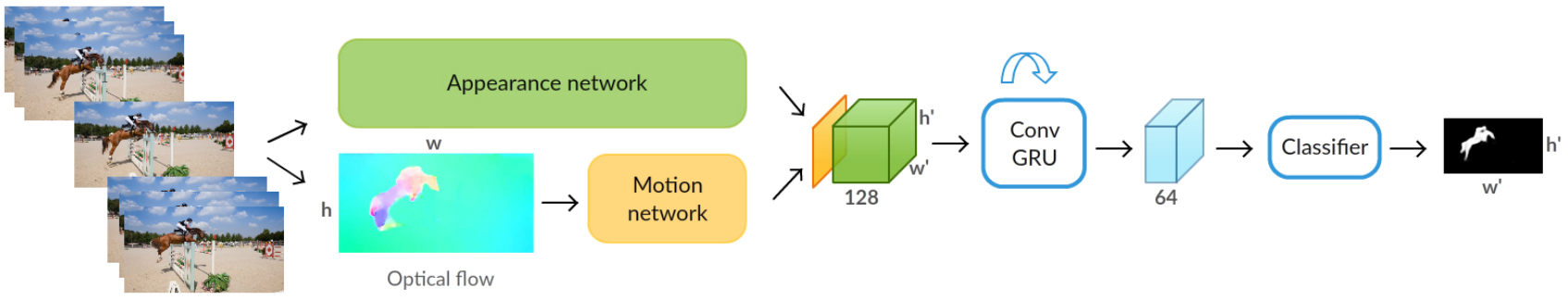

This paper addresses the task of segmenting moving objects in unconstrained videos. We introduce a novel two-stream neural network with an explicit memory module to achieve this. The two streams of the network encode spatial and temporal features in a video sequence respectively, while the memory module captures the evolution of objects over time. The module to build a "visual memory" in video, i.e., a joint representation of all the video frames, is realized with a convolutional recurrent unit learned from a small number of training video sequences. Given a video frame as input, our approach assigns each pixel an object or background label based on the learned spatio-temporal features as well as the "visual memory" specific to the video, acquired automatically without any manually-annotated frames. The visual memory is implemented with convolutional gated recurrent units, which allow spatial information to be propagated over time. We evaluate our method extensively on two benchmarks, the DAVIS and Freiburg-Berkeley motion segmentation datasets, and show state-of-the-art results. For example, our approach outperforms the top method on the DAVIS dataset by nearly 6%. We also provide an extensive ablative analysis to investigate the influence of each component in the proposed framework.
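To make the idea of a convolutional gated recurrent unit more concrete, the following is a minimal NumPy sketch of one such cell applied frame by frame. It is not the released code: the names `conv2d_same`, `ConvGRUCell` and `run_memory` are hypothetical, the weights are random rather than learned, biases are omitted, and the gating convention (which gate scales the previous state) is an assumption based on the standard GRU formulation with matrix products replaced by convolutions.

```python
import numpy as np


def conv2d_same(x, w):
    """Cross-correlate x (C_in, H, W) with w (C_out, C_in, k, k).

    Zero padding keeps the spatial size H x W (k is assumed odd).
    """
    c_out, _, k, _ = w.shape
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    h, wd = x.shape[1:]
    out = np.zeros((c_out, h, wd))
    for i in range(k):
        for j in range(k):
            # Contribution of kernel tap (i, j) to every output channel.
            out += np.einsum("oc,chw->ohw", w[:, :, i, j],
                             xp[:, i:i + h, j:j + wd])
    return out


def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))


class ConvGRUCell:
    """Hypothetical ConvGRU cell; weights are random, not trained."""

    def __init__(self, in_ch, hid_ch, k=3, seed=0):
        rng = np.random.default_rng(seed)
        shape = (hid_ch, in_ch + hid_ch, k, k)
        self.w_z = rng.normal(0.0, 0.1, shape)  # update gate
        self.w_r = rng.normal(0.0, 0.1, shape)  # reset gate
        self.w_h = rng.normal(0.0, 0.1, shape)  # candidate state
        self.hid_ch = hid_ch

    def step(self, x, h):
        """Update the memory h (hid_ch, H, W) with frame features x (in_ch, H, W)."""
        xh = np.concatenate([x, h], axis=0)
        z = sigmoid(conv2d_same(xh, self.w_z))
        r = sigmoid(conv2d_same(xh, self.w_r))
        xrh = np.concatenate([x, r * h], axis=0)
        h_tilde = np.tanh(conv2d_same(xrh, self.w_h))
        # Assumed convention: z blends the old state with the candidate.
        return (1.0 - z) * h + z * h_tilde


def run_memory(frame_features, cell):
    """Run the cell over a list of per-frame feature maps, returning all states."""
    h = np.zeros((cell.hid_ch,) + frame_features[0].shape[1:])
    states = []
    for x in frame_features:
        h = cell.step(x, h)
        states.append(h)
    return states


if __name__ == "__main__":
    cell = ConvGRUCell(in_ch=4, hid_ch=3)
    frames = [np.full((4, 5, 6), float(t)) for t in range(3)]
    states = run_memory(frames, cell)
    print(len(states), states[-1].shape)
```

Because every gate is computed by a convolution, the hidden state keeps its spatial layout, so each memory location summarizes what has been observed around that pixel in earlier frames. In the approach described above, the per-frame inputs would be the learned spatio-temporal features from the two streams, and a further layer would map the memory state to per-pixel object or background labels; both are left out of this sketch.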

Paper

BibTeX

@InProceedings{Tokmakov17,
  author = "Tokmakov, P. and Alahari, K. and Schmid, C.",
  title = "Learning Video Object Segmentation with Visual Memory",
  booktitle = "ICCV",
  year = "2017"
}

Code

The code and trained models are available under this link.

See the details in the README file.

The code and trained models for the extended journal version of the paper are available here.

Acknowledgements

This work was supported in part by the ERC advanced grant ALLEGRO, a Google research award, and gifts from Facebook and Intel. We gratefully acknowledge NVIDIA's support with the donation of GPUs used for this work.

Copyright Notice

The documents contained in these directories are included by the contributing authors as a means to ensure timely dissemination of scholarly and technical work on a non-commercial basis. Copyright and all rights therein are maintained by the authors or by other copyright holders, notwithstanding that they have offered their works here electronically. It is understood that all persons copying this information will adhere to the terms and constraints invoked by each author's copyright. This page style is taken from Guillaume Seguin.